Wednesday, December 22. 2010

ISP Popularity by Domain Count

Posted by Ilia Alshanetsky in PHP, Stuff at 12:06

The past two information gathering runs showed that GoDaddy is the world's largest ISP, but I was curious who else falls into the “Top ISP” category as determined by consumer shopping habits. To find out, I’ve used my resolved IP database of 124 million domains and an ISP database from MaxMind.

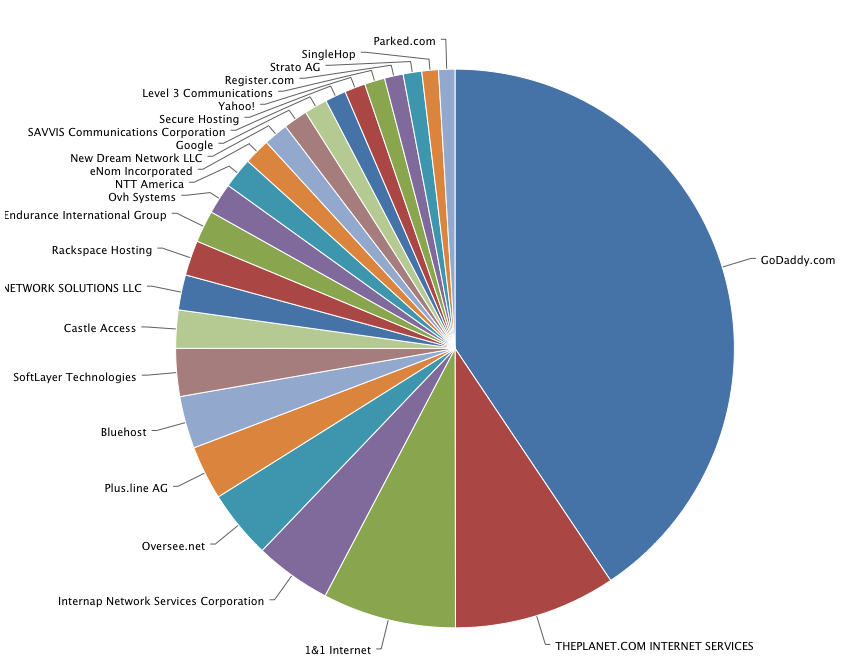

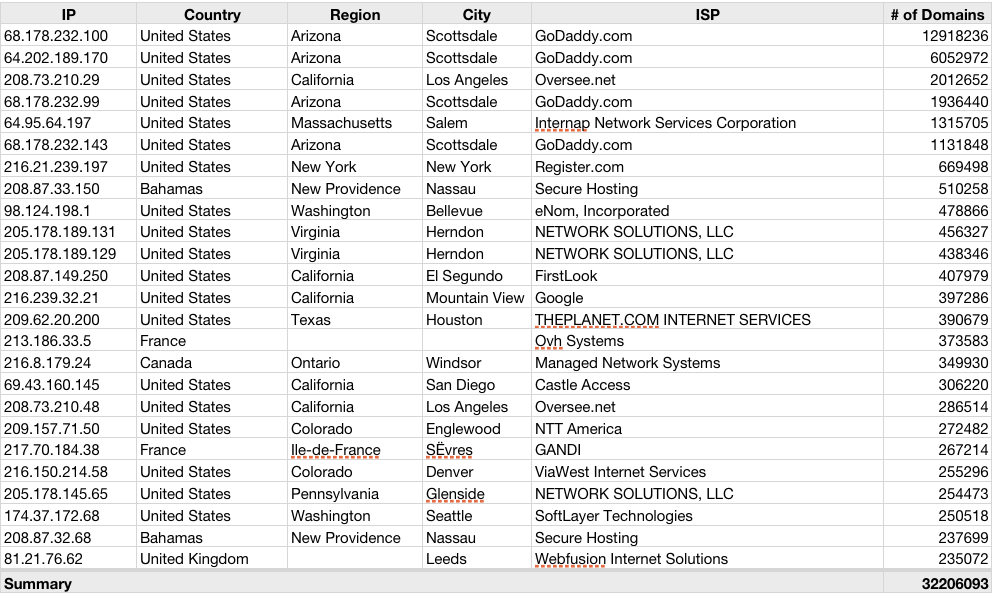

The results are pretty interesting, and they clearly show that a small number of ISPs are definitely doing something right, which is causing many consumers to vote with their dollars in those ISPs’ favor. As usual the information is shown in graph form. To filter the data down to just the large providers I’ve set a minimum of 100,000 domains, leaving me with just 122 ISPs. The image below shows the breakdown of the Top 25. If you click on it, you will be able to expand the chart to 50, 100 or the entire list in the form of a bar chart, or explore the pie chart that includes percentages.

Since we already know GoDaddy is #1, I will skip over them and focus on the next largest 3. At #2, we have The Planet, a Texas-based data-center provider with 6.1 million domains (4.91% of total). I should mention that The Planet recently merged with Softlayer Technologies, #8 on our list, which would further expand their prominence by another 1.8 million domains. At #3 we have 1&1 Internet, a German ISP with 5 million domains (4.04%). And finally at #4 we have Internap, an Atlanta-based ISP with 2.88 million domains (2.32%).

In total, the 25 largest ISPs represent 52.3% of all the domains, which is quite impressive since overall there are 31,173 distinct ISPs. Even within the top 25 things are not entirely even: only the first 15 can claim over 1 million domains. Expanding the ISP list to the Top 50 expands the domain coverage to 59.67%, not a whole lot. However, once the entire list of ISPs with >100k domains is considered, we are looking at 68.9% representation. This means that 2/3 of the entire internet is effectively managed by only 122 companies. Kinda scary, actually.

In an effort to validate the popularity of the ISPs, I’ve also decided to look at the IP address breakdown (how many domains per IP) and which ISPs the top IPs belong to. This should identify the ISPs whose business is predominantly the result of parked domains.
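The share figures quoted for the top ISPs follow directly from the domain counts and the 124 million total. Below is a minimal Python sketch of that arithmetic. The counts are the rounded figures from the post, so the computed shares can differ from the quoted ones in the last digit; the function and variable names are made up for illustration.

```python
TOTAL_DOMAINS = 124_000_000  # size of the resolved data set, as stated in the post

# Rounded domain counts quoted in the post (assumption: rounding explains
# small differences from the percentages given in the text).
isp_domains = {
    "The Planet": 6_100_000,
    "1&1 Internet": 5_000_000,
    "Internap": 2_880_000,
}


def share_percent(domains: int, total: int = TOTAL_DOMAINS) -> float:
    """Return the percentage of all domains held by one ISP."""
    return 100.0 * domains / total


if __name__ == "__main__":
    for name, count in isp_domains.items():
        print(f"{name}: {share_percent(count):.2f}%")

    # The Planet plus Softlayer (another 1.8 million domains) after the merger.
    merged = isp_domains["The Planet"] + 1_800_000
    print(f"The Planet + Softlayer: {share_percent(merged):.2f}%")
```

The same helper can be used to re-check the Top 25, Top 50 and >100k coverage figures if the per-ISP counts are available.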
The results for the Top 25 IP addresses are shown in the table below.

When it comes to IP addresses, GoDaddy is by far the most frugal, which probably relates to the fact that they do a lot of domain parking. Both #1 and #2 on the list belong to GoDaddy, representing 12.9 million and 6 million domains respectively. I should mention that #4 with 1.9 million domains and #6 with another 1.1 million domains also belong to GoDaddy. Based on this information it would seem that GoDaddy’s prominence in the ISP space is predominantly based on parked domains, which take up 22 million of the 26.4 million being hosted with them. This can probably be attributed to GoDaddy’s extremely aggressive pricing when it comes to buying domains.

The 3rd most popular IP address belongs to Oversee.net, with just over 2 million domains. Given that Oversee.net only has 2.57 million domains under management, it would seem that most of them are parked domains, the same situation as with GoDaddy. A couple of other big IPs are worth noting. One is 64.95.64.197, which belongs to Internap, with 1.31 million domains resolving to it. Given that Internap hosts 2.88 million domains, it seems just shy of 1/2 of their domain base is parked. Another “winner” is 216.21.239.197, which belongs to Register.com, with 669k domains, which represents nearly the entirety of the 703k domains at that ISP. Unsurprisingly Network Solutions is in the same boat: of the 1.32 million domains under management, 1.15 million are parked on 3 IP addresses.

On a general note, the 124 million domains are hosted on just 5.39 million IP addresses, which raises interesting questions about IPv4 exhaustion; clearly domain hosting is not the reason. So what the heck are people using those public IPs for? If they are being used by Internet Providers to provide internet to consumers, one would think that more extensive use of NAT would certainly alleviate the IPv4 shortage for quite some time.
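To put the IP numbers in perspective, here is a small Python sketch that computes the average number of domains per IP and, for each ISP mentioned, the fraction of its domains that sit on its busiest IP addresses. The figures are the rounded ones from the post (Oversee.net's "just over 2 million" is entered as 2,000,000), and treating "domains on the busiest IPs" as "parked" is the post's inference, not something the code verifies. All names are illustrative.

```python
TOTAL_DOMAINS = 124_000_000  # from the post
TOTAL_IPS = 5_390_000        # from the post

# ISP -> (domains on its busiest IPs, all domains at that ISP), rounded.
busiest_ip_counts = {
    "GoDaddy": (22_000_000, 26_400_000),
    "Oversee.net": (2_000_000, 2_570_000),
    "Internap": (1_310_000, 2_880_000),
    "Register.com": (669_000, 703_000),
    "Network Solutions": (1_150_000, 1_320_000),
}


def average_domains_per_ip(domains: int = TOTAL_DOMAINS, ips: int = TOTAL_IPS) -> float:
    """Average number of domains resolving to a single IP address."""
    return domains / ips


def concentration(on_busiest_ips: int, hosted: int) -> float:
    """Fraction of an ISP's domains that resolve to its busiest IPs."""
    return on_busiest_ips / hosted


if __name__ == "__main__":
    print(f"average domains per IP: {average_domains_per_ip():.1f}")
    for isp, (busiest, hosted) in busiest_ip_counts.items():
        print(f"{isp}: {concentration(busiest, hosted):.0%} on busiest IPs")
```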
There are some 81,164 IPs that have over 100 domains resolving to them; combined, their total domain count reaches 87.4 million, 70% of all registered domains. Since it is safe to assume that in most cases virtual hosting won’t have more than 200 sites per IP, then based on the gathered data, 81.4 million domains are parked on 37,865 IPs. This in turn means that 2/3 (65.4%) of all domains are not actually in use. That number is very similar to the % of domains hosted by the world’s top ISPs, so perhaps things are not so bad after all and the non-parked domains are more evenly distributed than the initial numbers might suggest.

Tuesday, December 21. 2010

Domain Distribution by City

Posted by Ilia Alshanetsky in PHP, Stuff at 08:04

As part of my on-going domain informatics coverage, I am now publishing some additional information that I’ve been able to gather in the last few days.

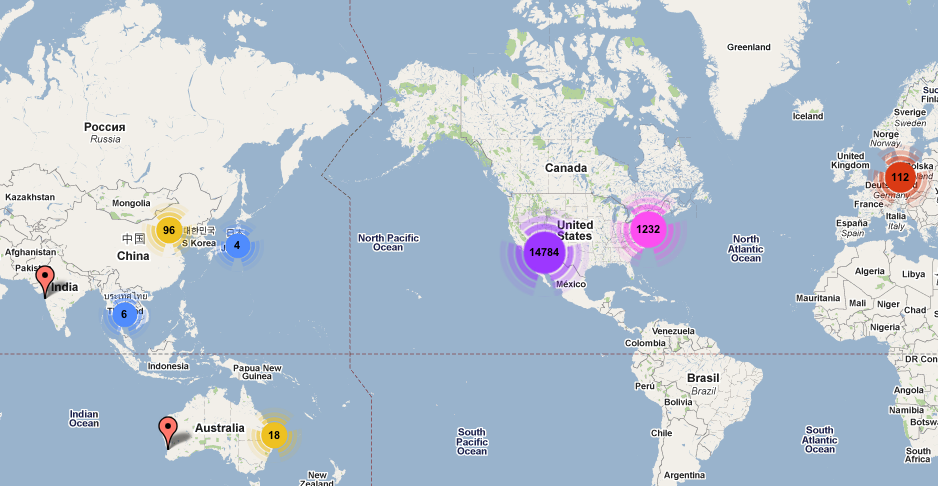

I am making available two additional geographic charts that break down the domain distribution by top world cities. The first chart, a preview of which can be seen below (click to see the full, browsable/zoomable version), shows the Top 150 cities by domain distribution. These cities represent a total of 91.3% of some 102 million domains that could be resolved to a city level.

The most popular city in the world, with 26.4 million domains calling it home, is Scottsdale, Arizona in the United States. This is not entirely surprising, given that it is the hometown of GoDaddy, the world’s largest domain provider/hosting company. It also means that Arizona's win in the US state domain count contest, with 26.7 million domains in our previous round of statistics, is almost entirely due to GoDaddy. The 2nd largest city is San Francisco, with a respectable 14.3 million domains, which represents over 1/2 of the 24.3 million domains hosted in California. And 3rd place goes to Houston, Texas with just shy of 6.1 million domains. Overall there are only 15 cities in the world that can claim to host over 1 million domains, and all of them are found in the US. The first non-US city is actually Toronto, Canada at #19 with 850 thousand domains, closely followed by Beijing, China with 845 thousand domains. The smallest city on the Top 150 list is Oklahoma City (capital of Oklahoma, US) with just 43.4 thousand domains. That seems tiny compared to the beginning of the list, but on a global country scale it would actually make it into the Top 50 at #49, with almost twice as many domains as Iran (23.4 thousand domains), which would now be displaced to 50th place.

To give a slightly better view of domain concentration, I am publishing a dynamic cluster map that groups cities together by geographic proximity. Zooming in on the map will break down the larger clusters into smaller ones, eventually resolving all the way to the individual cities. Due to better visualization of markers on this map, I’ve expanded the city list to the Top 400 cities, which takes us down to as little as 8,600 domains and provides a slightly more worldly view. Click on the map below to see the dynamic version.
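For readers curious how a zoom-dependent cluster map can group cities, here is a minimal Python sketch that buckets cities into a latitude/longitude grid and sums their domain counts per cell. This is a simple stand-in technique, not necessarily what the map shown here does: the grid approach, the `cell_degrees` parameter, and the approximate coordinates are all assumptions made for illustration. Domain counts are the rounded figures from the post.

```python
import math
from collections import defaultdict

# (city, approximate latitude, approximate longitude, domains)
CITIES = [
    ("Scottsdale", 33.49, -111.93, 26_400_000),
    ("San Francisco", 37.77, -122.42, 14_300_000),
    ("Houston", 29.76, -95.37, 6_100_000),
    ("Toronto", 43.65, -79.38, 850_000),
]


def cluster_cities(cities, cell_degrees):
    """Group cities into square grid cells of the given size in degrees.

    Returns {cell: (total_domains, [city names])}. A large cell size mimics
    a zoomed-out map; a small one mimics zooming in to individual cities.
    """
    totals = defaultdict(int)
    members = defaultdict(list)
    for name, lat, lon, domains in cities:
        cell = (math.floor(lat / cell_degrees), math.floor(lon / cell_degrees))
        totals[cell] += domains
        members[cell].append(name)
    return {cell: (totals[cell], members[cell]) for cell in totals}


if __name__ == "__main__":
    for size in (90, 1):
        print(f"cell size {size} degrees:")
        for cell, (domains, names) in cluster_cities(CITIES, size).items():
            print(f"  {cell}: {domains:,} domains in {', '.join(names)}")
```

A fixed grid splits neighbours that happen to straddle a cell boundary, which is why real map libraries tend to use distance-based clustering instead; the grid keeps the sketch short.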

Sunday, December 19. 2010

Domain Location Statistics

Posted by Ilia Alshanetsky in PHP, Stuff at 16:51

I recently restarted the process of aggregating PHP usage data, and the first sample from a small dataset (about 10 million domains) has been the subject of my PHP Advent article. Now, I've started the process of collecting the data on the full data set, which comprises 124 million domains that represent the entirety of the .com, .net, .biz, .info, .us, .sk and .org TLDs.
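The first stage of a collection run like this is turning each domain name into an IP address. The Python sketch below shows one simple, sequential way to do that with the standard library and then count how many domains share each IP. It is only an illustration under stated assumptions: the actual tooling used for the 124 million domains is not described in this post, a run of that size would need parallel or asynchronous lookups, and the function names and `.example` domains are hypothetical.

```python
import socket
from collections import Counter
from typing import Callable, Dict, Iterable, Optional


def resolve_all(
    domains: Iterable[str],
    resolver: Callable[[str], str] = socket.gethostbyname,
) -> Dict[str, Optional[str]]:
    """Resolve each domain to an IPv4 address; failed lookups map to None."""
    results: Dict[str, Optional[str]] = {}
    for domain in domains:
        try:
            results[domain] = resolver(domain)
        except OSError:  # socket.gaierror is a subclass of OSError
            results[domain] = None
    return results


def count_domains_per_ip(resolved: Dict[str, Optional[str]]) -> Counter:
    """Count how many successfully resolved domains point at each IP."""
    return Counter(ip for ip in resolved.values() if ip is not None)


if __name__ == "__main__":
    # Hypothetical input list; real runs would read domains from zone files.
    resolved = resolve_all(["example.com", "example.org"])
    for ip, count in count_domains_per_ip(resolved).most_common():
        print(ip, count)
```

Passing the resolver in as a parameter makes it easy to swap in a faster DNS client or a stub for testing without touching the counting logic.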

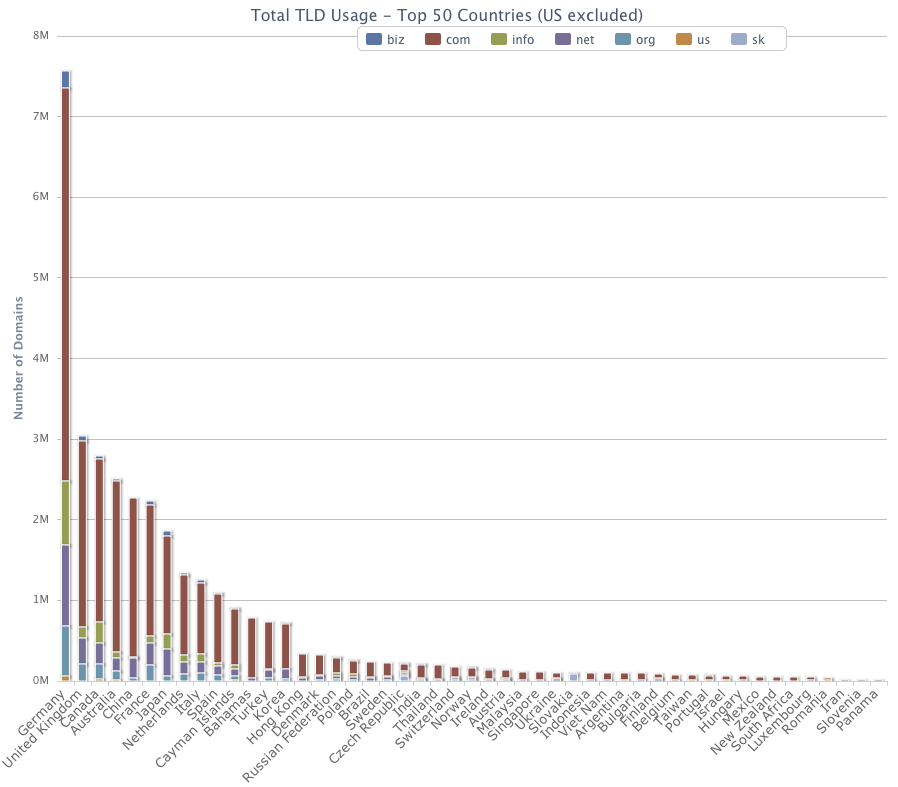

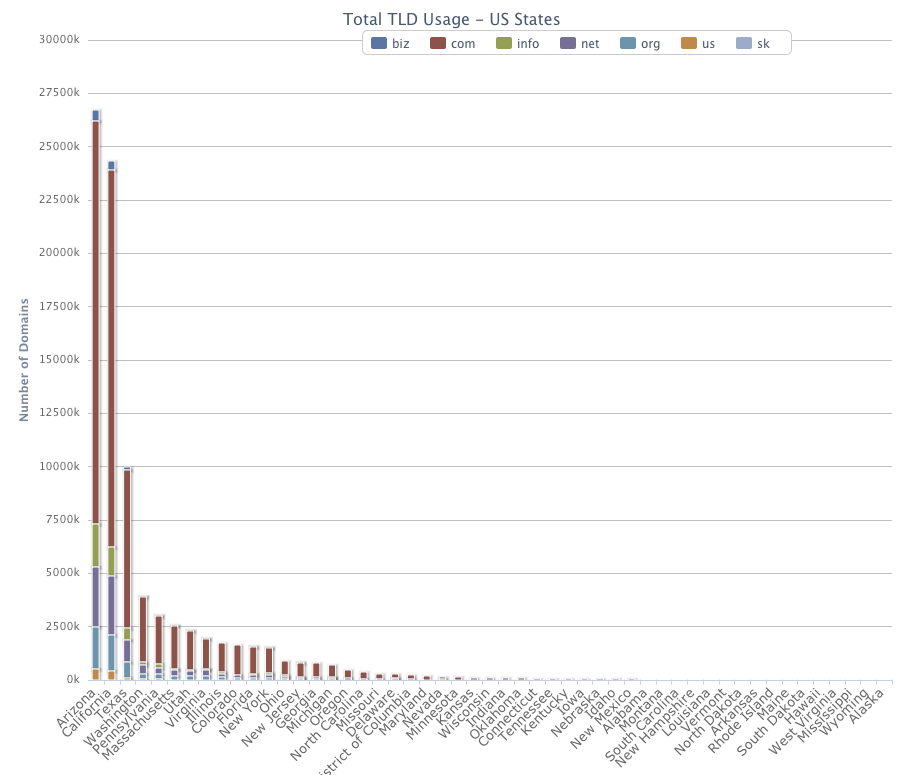

The first step of the process has been resolving all of these domains, which is now complete. The next step is fetching the server information, which has begun but will take some time to finish. However, even from the domain resolving data there is a lot of useful information to be gleaned, which is what I am now publishing.

My first focus was on the world-wide distribution of these TLDs, which at least for me held a few surprises. First of all, unsurprisingly the US has the most domains hosted on its soil; however, the sheer degree to which it overwhelms other countries is quite impressive: it stands at just over 89 million domains, 71% of all domains! In 2nd place is Germany (which was actually a surprise, my guess was that it would be Canada or Japan) with 7.5 million domains, and the bronze goes to the United Kingdom with 3 million domains. Canada was actually 4th with 2.7 million domains and Japan all the way in 9th place with 1.86 million domains.

Below is a graph representing the top 50 countries according to the domain distribution. To see more detail, click on the image, which will bring up a zoomable HighCharts graph. Note that the US is excluded: due to its high number of domains it made the graph unusable, and unfortunately the HighCharts library does not support a logarithmic scale. What I did instead is create a separate chart, which breaks down the domain distribution by US states and can be found further in the article.

As you can tell from the world chart, the domain distribution is highly uneven: the top entry starts with Germany at 7.5 million, and by the time we hit Spain at #12, we are down to 1 million domains. By the time we get to Bulgaria in 37th place we are down to just 100k domains. By the time the last entry in our chart appears, which happens to be Panama, we are down to a mere 21,709 domains.

As with the world data, the US domain distribution also had a surprise in store for me. I would've guessed that the majority of domains would find their home in California, being itself the home of Silicon Valley. However, California actually came in second, behind Arizona of all states. Arizona is home to 26.7 million domains, while California can only lay claim to 24.3 million. Texas came in 3rd with a mere 9.99 million domains. Below is the chart showing all the states; click on the image to bring up a zoomable HighCharts graph.

As with the world stats, the US domain distribution is also extremely uneven: only 12 states can lay claim to being home to over a million domains, the 12th being New York with 1.56 million domains. By the time we reach Indiana at #27, we are down to just 100k domains. Alaska trails the list at a mere 4729 domains, which seems tiny; however, it is still more than 130 countries have, and on a world scale would place it at #71, ahead of Iceland and Saudi Arabia, which both have just over 4100 domains.

I've also created a clickable world map that will let you explore all 222 countries. The US and Canada are clickable and will give you a breakdown of the individual states/provinces.

While the server information script is gathering data, which should take about 2 weeks, I am hoping to generate more data based on the IPs, which should give ISP popularity, the avg. # of domains per single IP with info on the "top IPs", and other curious bits of info I can glean from the free/public MaxMind database I am using.

P.S. If you have access to TLDs other than the ones I listed, I would be grateful if you would be willing to provide domain lists for those TLDs on a one-time or monthly basis.

Wednesday, June 10. 2009

Ontario Monitor Tax

I was buying 2 monitors for the office today, and when it came to paying the bill I discovered a rather nasty surprise. It seems that the Ontario government has decided to apply a $12.03 per monitor recycling tax as part of their EOS program. It looks like our liberal provincial government is always on the lookout to grab more of our money, so they can spend it on idiotic initiatives such as eHealth.

Tuesday, April 14. 2009

5 Letter Domain (ia.gd)

After reading about rev="canonical" and all the hoopla about domain shorteners, I've decided to see how short of a domain I could get for my blog to avoid having people needing to use things like tinyurl etc... to reduce the lengths of URLs. The goal was to get a 2 letter domain with a 2 letter extension, an easy solution seemed to be the .me extension (Montenegro), but alas I could not find a single registrar that would allow a 2 letter domain registration (ia or ai, my initials). It seems most registrars or resellers use generic libraries that no matter what disallow registration of domains <3 characters in length. The reason for this being that most (but not all) registries typically reserve 1 and 2 letter domains. So even when the domains are available, you cannot register them. Fortunately, after some whining on twitter, someone from iWantMyName did some targeted marketing at me

The end result: I am now a happy owner of ia.gd (.gd being the extension for Grenada). It was not particularly cheap at $49, as compared to GoDaddy where a common extension can be had for $7-$9 depending on the promotion at the time. However, GoDaddy's domain search system blocks 2 letter domains en masse, even if they are allowed, so GoDaddy's #fail is iWantMyName's #win.

Wednesday, March 25. 2009

IE8 X-UA-Compatible Rant

A few days ago, Microsoft did a low-key launch of IE8, which means you need to make your applications compatible with 3 differently broken versions of IE ;-P. The biggest challenge IE8 poses is that it runs in "strict" mode by default, which coincidentally breaks all of the previous IE6/7 hacks that had to be put in place to make CSS and Javascript render in IE the same way they do in other browsers.
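For the impatient: the fix this post eventually arrives at is sending X-UA-Compatible as an HTTP response header rather than as a meta tag, and that idea is not tied to any one language. Below is a minimal Python sketch of it as a WSGI application. Only the header name and the IE=EmulateIE7 value come from this post; the `app` function and the page body are hypothetical, and this is not the code our own pages use.

```python
def app(environ, start_response):
    """Tiny WSGI app that asks IE8 to render the page the way IE7 would."""
    body = (
        b"<!DOCTYPE html><html><head><title>demo</title></head>"
        b"<body>Hello</body></html>"
    )
    headers = [
        ("Content-Type", "text/html; charset=utf-8"),
        ("Content-Length", str(len(body))),
        # Sent as a response header, so it does not depend on the page's
        # DOCTYPE or on a meta tag inside the markup.
        ("X-UA-Compatible", "IE=EmulateIE7"),
    ]
    start_response("200 OK", headers)
    return [body]
```

To try it locally, the app can be served with the standard library, for example `wsgiref.simple_server.make_server("127.0.0.1", 8000, app).serve_forever()`. In production the same header is often easier to add once in the web server configuration than in every script.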

Fortunately, unless you wish to refactor your entire code to support IE8 strictness, MS did add a "compatibility" switch, via the X-UA-Compatible meta-tag or header. If you change its value to "IE=EmulateIE7", IE8 emulates the "strict" mode ala IE7, which at least in all of our code makes it render things properly. However, there is a "slight" problem, which I discovered while trying to implement this function. According to the docs, you can trigger this behavior via the following meta tag:

CODE: <meta http-equiv="X-UA-Compatible" content="IE=EmulateIE7" />

Unfortunately it didn't work, and according to IE8's developer tool the default strict mode of IE8 was still being used. No matter the value, be it IE=IE7 or IE5 or EmulateIE7, it would just be ignored. Eventually, I realized that perhaps the DTD is the problem. Our pages use the XHTML/Transitional DTD:

CODE: <!DOCTYPE html PUBLIC "-//W3C//DTD XHTML 1.0 Transitional//EN" "http://www.w3.org/TR/xhtml1/DTD/xhtml1-transitional.dtd">

This means custom meta tags are effectively spec violations, which, as far as I can tell, IE8 in its strict mode happily ignores. Catch 22! This is despite a nice note inside the MSDN Blog that says:

CODE: NOTE: The X-UA-Compatible tag and header override any existing DOCTYPE.

The solution is to set the X-UA-Compatible value via an HTTP header sent from PHP. This means the parameter is outside of the HTML and will get properly captured by IE; in our case that solved all of the JS and CSS issues that appeared in IE8. For those of you using the ExtJS date picker, this does solve the cut-off of the calendar window.

Saturday, March 7. 2009

I'm on Twitter

At the PHP Quebec conference I've succumbed to peer pressure and decided to sign-up for twitter. So, for those of you interested in following me (damn stalkers

Sunday, January 25. 2009

"How to Increase Fuel Mileage on a Car" - According to WikiHow

Posted by Ilia Alshanetsky in Stuff at 14:18

In a moment of boredom, yesterday I happened to browse to WikiHow. At the bottom of their site I noticed a rather curious button, advertising that their site is "Carbon Neutral". Since this was the first time I've ever seen something like that I was naturally curious, so I clicked the link to learn more. This took me to a short article giving a pseudo-scientific calculation of how much carbon is consumed by their site, down to their share of train travel. If you are curious you can find the breakdown here: wikiHow:Carbon Neutral. One curious thing I noted was "Jack riding bike to work: 0 lbs of carbon!". Last time I checked, strenuous physical activity increases heart rate, which in turn causes the person to breathe in more oxygen and subsequently expel more carbon dioxide. This means that if Jack drove a car, or rode a bus or train, he'd actually contribute less carbon to the environment and leave more oxygen for the rest of us.

Anyhow, on that page they also had a reference on how/where you can buy "Carbon Credits" to offset your impact on the environment, allowing you to equalize your environmental karmic balance or something. What will they come up with next! And best of all it can be done in 7 easy steps, which sounds simple enough for me to follow and actually remember.

Step #1 starts with identifying sources of carbon dioxide production in your daily life. OK, sounds simple enough. Let's see: mammals, those filthy carbon producing critters, need less of'em; gotta eat more of them to save the environment, especially those endangered ones like Tigers and Pandas... mmm... Panda stew... **drool**. Oops, sorry, got distracted there for a second... Definitely gotta eat fewer plants, they actually scrub carbon dioxide and therefore are carbon negative, so no more salad. OK, can do. To all you vegetarians: quit killing the environment by your uncontrolled destruction of carbon reducing greenery and start saving the environment by eating Rhinos, Gazelles, red wolves and spider monkeys, especially the spider monkeys!

Step #2 involved cars. First they suggested that I drive less; well, that's not gonna happen. Then they tried to wean me off driving entirely. Fat chance! You gotta wonder why they would say I should drive less, and when I say NO WAY IN HELL, they actually have the audacity to try and convince me to stop driving entirely. Completely illogical, I tell ya! But they also had a good suggestion of improving your car's fuel efficiency, which immediately got me interested. I mean, save money on expensive gas and save the environment, JACKPOT! So how is that actually done? Apparently there are 26 steps, holy cow! That's like 25 too many for me, but I persevered and read them all anyway; there is nothing I wouldn't do to save mon^H^H^H the environment.

The best ones I have decided to share with you all, so you too can do your part to save the environment, and for your convenience I've simplified it to 5 easy for consumption ideas, so you don't have to suffer through all 26.

1) Lighten your load. An extra 100 pounds increases fuel consumption by 1-2%. Roger that, no more driving other people or car pooling, those fat bastards can drive their own cars! Making me damage the environment by reducing my fuel efficiency, some people just have no respect for the environment I tell ya.

2) Avoid braking wherever possible. Braking wastes energy from fuel that you have already burned. Good idea, you should start drifting corners, do engine braking and run lights whenever possible to avoid slowing down and losing precious inertia & kinetic energy.

3) Tune up your engine. A properly tuned engine maximizes power and can greatly enhance fuel efficiency. Wow, I didn't know that tuning the engine would make it use less fuel. Go and have a two stage turbo installed on your car immediately, and get another 300 bhp of environment saving goodness.

4) Learn to watch and predict traffic signals. Stop-and-go driving is wasteful. Useful tip. You should immediately start work on developing telepathy and telekinesis. Since this may take some time, while you are hard at work evolving your mental prowess you can use MIRT, which will allow you to control traffic lights without the use of super-powers.

5) Don't circle in a parking lot. Look for a spot in the empty half of the parking lot. This is probably the one I disagree with the most. Basically, to save the environment you need to park in the empty part of the parking lot right near the entrances, in the spots that are typically empty and usually reserved for pregnant women, the elderly and the disabled. I suppose to save the environment we all need to make sacrifices, and become heartless environmentalists.

I'd like to thank the WikiHow folks for all these useful tips and the frigid Canadian winter that inspired this rant... COME BACK GLOBAL WARMING, COME BACK!!!!

*** For those of you without a sense of humor, possibly lost due to environmental damage, this is a joke.

Monday, January 7. 2008

Fun Extract from Microsoft Silverlight License Terms

Posted by Ilia Alshanetsky in Stuff at 13:24

If you bother to read the MS Silverlight TOS you'll find this interesting bit, which I found quite amusing:

"LIMITATION ON AND EXCLUSION OF REMEDIES AND DAMAGES. You can recover from Microsoft and its suppliers only direct damages up to U.S. $5.00". Wow, how generous! This is then followed by: "It also applies even if Microsoft knew or should have known about the possibility of the damages." It's good to know the MS legal machine is working well; best of luck upholding this in any "sane" court.

Thursday, September 20. 2007

U.S. Agriculture Tax on Travel

Posted by Ilia Alshanetsky in Stuff at 10:33

I just got my confirmation for my flight to San Francisco to ZendCon happening in early October and noticed something interesting on my invoice for the flight in the "taxes area".

Taxes, Fees and Charges
---------------------------------------------------
Canada Airport Improvement Fee                         20.00
U.S.A Transportation Tax                               15.54
U.S Agriculture Fee                                     5.15
Canada Security Charge                                  7.94
Canada Goods and Services Tax (GST/HST #10009-2287)    26.01
U.S.A Immigration User Fee                              7.21

Why would an airline ticket include the U.S Agriculture Fee? Is there a tax on the air above the US farmland or something?

Tuesday, September 4. 2007

Moving to Flickr

After a few years on Gallery 1.X, which with a few tweaks worked quite well for me, I've decided to make the transition to Flickr's pro account. The conversion was largely made possible by a tweaked gallery2flickr script that allowed me to move albums over without losing any data in the process, which is always a good thing. It still took some time, but in the end I am quite happy with the results. Flickr has some very neat features in comparison to Gallery, such as geo-tagging and a very convenient interface for tagging and labeling photos, which at least in Gallery 1.X was rather frustrating.

My new gallery can now be found at http://www.flickr.com/photos/iliaal/

Thursday, March 8. 2007

Phantom 5.0.37 MySQL Release

Posted by Ilia Alshanetsky in Stuff at 22:16

I spotted Edin's blog post about PHP 4.4.6 now being linked against MySQL 5.0.36 on Windows and decided to see what is new in that release in comparison to the 5.0.33 I am currently running.

A quick visit to MySQL's downloads page revealed a distinct absence of said release, however. There are release notes for MySQL 5.0.37, which list some compelling fixes. Unfortunately, this release is nowhere to be found either, even though the release notes claim it was released on 27 February 2007. A simple question comes to mind: WTF?

Sunday, January 21. 2007

Has O'Reilly Gone Crazy?

Sometimes the things you'll find on the bookshelves of your local book store can be quite unusual and downright weird. Consider the following super-hero themed series of books on Windows products by O'Reilly.

Wednesday, January 10. 2007

MySQL 5.0.33 Community Server

Posted by Ilia Alshanetsky in Stuff at 13:15

As you may already know or soon will find out, MySQL has released a new version of their community server, 5.0.33. First of all, congratulations to the developers: any release is a lot of work, and finally pushing it out to the public is definitely an achievement.

There are however some interesting and in my eyes less than positive developments pertaining to this release. As you can see from Kaj's announcement as well as the state of MySQL's download page, pre-compiled binaries are no longer offered. The only files available for MySQL 5.0.33 are sources for *NIX and Windows platforms. This is not an issue for *NIX users, where the lack of binaries will be resolved by distros and, if not, the compiler is always available and compiling MySQL is no big issue. It does, however, pose a major problem for Windows users, who generally do not have access to a C/C++ compiler. This means that all the people who develop on Win32 and then deploy on *NIX machines will need to stick to older versions of the database for their dev environments or rely on someone other than MySQL to provide binaries (which may result in less than stable, trustworthy packages going around). This may also affect adoption rates, since many companies insist (and rightly so) on using the same version of the DB in production and development.

Interestingly enough, the "For maximum stability and performance, we recommend that you use the binaries we provide." statement on the download page still remains. I guess the suggestion is that if we (the users) want stability we need to go for the Enterprise edition.

Friday, December 1. 2006

IE6 and IE7 Testing Simplified

Posted by Ilia Alshanetsky in PHP, Stuff at 10:15

With the release of IE7 many web developers were faced with a need to test their applications on the different versions of IE, but had no means to do so since only one IE can run on Windows. There were different hacks available to get around this, but in most instances you ended up using a portion of the IE7 libs for IE6 emulation and thereby not getting quite the same behavior.

Today on the IE blog a much better solution was offered by Microsoft (kudos guys). Basically they've allowed Windows owners (after a genuine advantage check, which now can be done via Firefox as well) to download a WinXP SP2 image with IE6 and run it via a free download of "Virtual PC 2004". This means you can safely upgrade your WinXP box to IE7 and run IE6 via an image, thus giving you 2 versions of IE on the same machine with a minimum amount of hassle.
